|

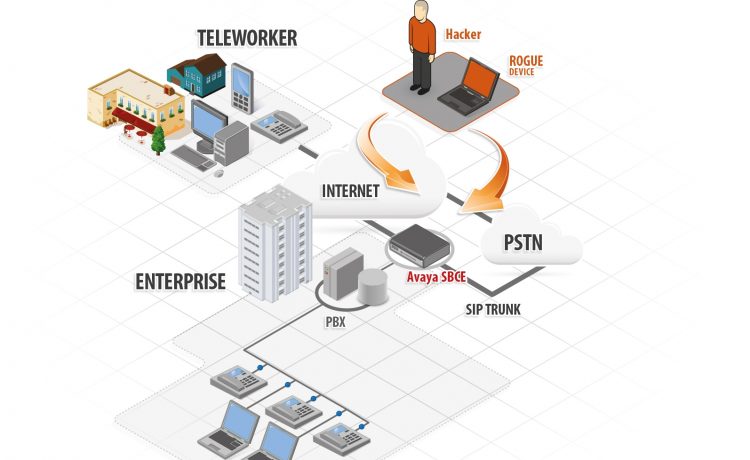

In the rapidly evolving landscape of communication technology, businesses increasingly rely on unified communication systems to streamline their operations. Enterprise Session Border Controllers (ESBCs) play a vital role in keeping communication smooth and secure across networks and devices. SBCs act as intermediaries between communication entities, providing security, interoperability, and session control.

Enterprise SBCs act as the first line of defense, safeguarding communication networks from various threats. By employing encryption protocols, SBCs protect sensitive data and prevent unauthorized access. They also offer access control, authentication, and fraud detection to mitigate risk. SBCs monitor and regulate communication sessions, preventing malicious activity such as denial-of-service (DoS) attacks and toll fraud. They additionally provide network topology hiding, concealing internal network details from external entities and thereby reducing exposure to potential threats.

Enterprises often rely on diverse communication platforms, such as Voice over IP (VoIP), video conferencing, and instant messaging. Enterprise SBCs enable connectivity between these platforms by acting as protocol translators: they convert signaling and media protocols so that different communication systems can interoperate. SBCs also provide transcoding, converting media streams between codecs to ensure compatibility. Moreover, SBCs manage NAT traversal, enabling communication between private and public networks despite the challenges of network address translation.
This capability is particularly crucial for remote and mobile workers, ensuring uninterrupted communication across different network environments.

Efficient call routing is essential to optimizing communication workflows. Enterprise SBCs can route calls intelligently based on predefined rules, such as load balancing, least-cost routing, or quality-of-service requirements. They can also route calls based on specific parameters like time of day, caller ID, or destination. This ensures that communication resources are used optimally and that calls are directed to the most appropriate destinations. Additionally, SBCs support dynamic routing updates, allowing real-time adjustments to changing network conditions and call traffic patterns.

Network performance plays a crucial role in high-quality communication. SBCs perform call admission control, monitoring the availability of network resources and ensuring that calls are admitted only when they are authorized and resources are available, thus preventing network congestion. SBCs also employ Quality of Service (QoS) mechanisms, prioritizing real-time communication traffic over non-real-time data, which improves call quality and reduces latency. Furthermore, enterprise SBCs can implement media anchoring, where media streams are processed closer to the source, reducing the load on the network and enhancing overall performance.

Over the course of the projection period, increasing adoption of 5G technology will create a number of growth opportunities in the worldwide unified communications market. The cloud computing industry has seen substantial changes as a result of the development of 5G technology.
The low-latency connectivity offered by 5G enables more fluid communications. Its adoption will probably bring frictionless file transfers, uninterrupted high-quality video conferencing, and a seamless cloud service experience. As a result, the development of 5G technology is projected to increase demand for unified communications and to open up numerous opportunities in that market globally.

In the rapidly evolving landscape of enterprise communication, Session Border Controllers (SBCs) are instrumental in unlocking next-level communication capabilities. By enhancing security, enabling seamless connectivity, providing advanced routing, and optimizing network performance, SBCs empower businesses to communicate efficiently and securely across diverse platforms.
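The rule-based routing described above (destination, time of day, least cost, available capacity) can be illustrated with a small sketch. This is only a toy model under stated assumptions: the `Trunk` record, its fields, and the `select_trunk` function are hypothetical names invented for illustration and do not correspond to any particular SBC product or configuration language.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class Trunk:
    """Hypothetical outbound route; all field names are illustrative."""
    name: str
    cost_per_minute: float
    prefixes: tuple                      # dialed-number prefixes this trunk serves
    open_from: time = time(0, 0)         # start of the time-of-day window
    open_until: time = time(23, 59, 59)  # end of the time-of-day window
    active_calls: int = 0
    max_calls: int = 100


def select_trunk(trunks, destination, now):
    """Return the cheapest eligible trunk, or None if no trunk qualifies."""
    candidates = [
        t for t in trunks
        if any(destination.startswith(p) for p in t.prefixes)  # destination rule
        and t.open_from <= now <= t.open_until                 # time-of-day rule
        and t.active_calls < t.max_calls                       # capacity check
    ]
    if not candidates:
        return None
    # Least-cost routing, with the less loaded trunk winning a cost tie.
    return min(candidates, key=lambda t: (t.cost_per_minute, t.active_calls))


trunks = [
    Trunk("uk-daytime", 0.01, ("+44",), open_from=time(8), open_until=time(18)),
    Trunk("default", 0.05, ("+",)),
]
chosen = select_trunk(trunks, "+442071234567", time(12, 0))
print(chosen.name if chosen else "no route")
```

A real SBC would evaluate such rules against live signaling and would typically update them dynamically; the sketch only shows how predefined rules narrow the candidate routes before a cost-based choice is made.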


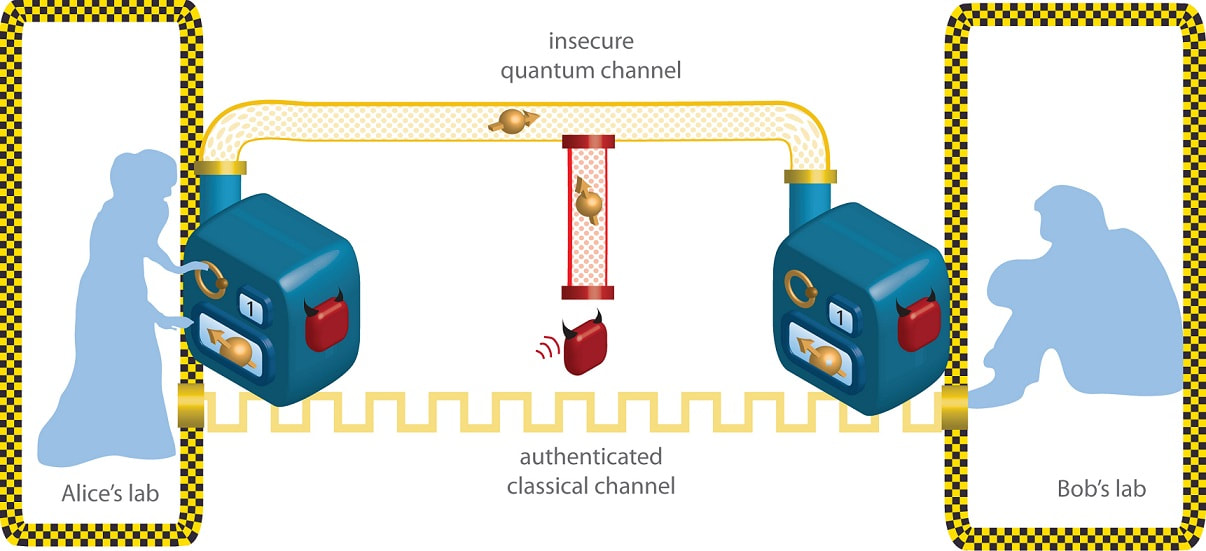

Gamers are becoming more concerned about AR-related health risks as new gaming hardware and software become available. Virtual and augmented reality games are highly engaging and keep players hooked for extended periods of time, which can lead to problems including anxiety, eye strain, obesity, and loss of attention. Because AR technology is so immersive, prolonged use of an AR headset may cause anxiety or stress. Users of AR gadgets are also exposed to electromagnetic radiation, which some fear can lead to disease. Researchers from the National Toxicology Program (NTP), an interagency federal programme based at the US National Institutes of Health, have carried out experiments on mice, and their findings have been cited as suggesting that exposure to electromagnetic radiation may increase susceptibility to cancer. As a result, using virtual and augmented reality gadgets too often may have negative health effects, which could slow the development of these technologies.

Enterprise virtual and augmented reality also has significant applications in training technicians and other employees. Workers will be able to perform more effectively with the aid of head-up displays and smart helmets that can interpret blueprints and instructions and offer real-time data. For instance, Unilever, a large maker of consumer goods, estimated that the retirement of ageing employees would cost one of its European plants roughly 330 years of domain experience. The manufacturing facility would have lost its domain expertise as a result, and there would have been expensive downtime.

Customers' biggest problem is that these technologies are only available on high-end desktops, laptops, and mobile phones. This makes them less accessible to average users and deprives those users of newer technical experiences.
For the market to grow, the limited reach of augmented reality technologies has to be addressed. The hardware and the majority of augmented reality software apps are only enabled on high-end devices, even though the programmes could run on mid-range devices as well.

There is a lot of discussion about "augmented reality" and "virtual reality." The Oculus Quest and Valve Index are two popular VR headsets, and AR apps and games like Pokemon Go are still widely used. The two terms sound similar, and as the technologies advance they overlap somewhat. However, these are two quite distinct ideas with features that make it easy to tell one from the other. Both use simulations to either enhance or completely replace a real-world environment. Augmented reality (AR) typically enhances your surroundings by adding digital features to a live view, often through the camera on a smartphone. Virtual reality (VR) is an entirely immersive experience that substitutes a virtual environment for the actual world.

In addition, virtual and augmented reality technologies can be used for data visualisation, for industrial design, architecture, and urban planning, and, obviously, for entertainment; the gaming sector has huge promise in this area (Brey, 1999, 2008; Wassom, 2014). Furthermore, it is already common to converse with someone who is physically elsewhere but whose virtual representation is present in a virtual (VR) or real (AR) setting, which could eliminate the need to travel for meetings. Despite all the advantages, readers should be aware that XR technology also raises a number of intriguing and crucial ethical issues.
The ability to interact with virtual characters through XR, for example, raises the question of whether the golden rule of reciprocity should be applied to fictional virtual characters and, with the development of tools that enable more realism, whether it should also apply to virtual representations of real people.

The foundation of cryptocurrency mining is the decentralisation of transaction oversight. Miners, who are typically individuals, verify the transactions made by other users, and a powerful computer is required to validate them. Hash codes are generated throughout the validation process to secure the transactions, and producing them takes powerful and efficient hardware. To find and solve fresh blocks, miners must produce as many hash codes as they can, and they are rewarded for doing so. Cryptocurrency mining rigs come in a variety of sizes and designs. By type of processor, the cryptocurrency hardware market is divided into GPUs, CPUs, FPGAs, and ASICs.

Mining is a crucial operation for the creation, transmission, and confirmation of cryptocurrency transactions. It guarantees the currency's steady, secure propagation from a payer to a receiver. Cryptocurrencies operate on a peer-to-peer network and are decentralised, in contrast to fiat currency, where a central authority oversees and controls all transactions. Transactions that occur without the knowledge of stakeholders raise concerns about lack of transparency, particularly in Asian nations, where erroneous or unwanted transactions, such as the deduction of scheduled charges, are regularly seen. Customers may lose a significant amount of money as a result, whether the cause is human error, mechanical fault, or data manipulation during the transaction process.
Additionally, financial institutions typically do not acknowledge their mistakes. The public is unhappy with the current monetary system because of its lack of transparency.

The market for cryptocurrencies is not yet regulated. One of the main obstacles to the adoption of cryptocurrencies at the moment is the absence of laws and the ambiguity surrounding them. Although financial regulators around the world are attempting to develop universal standards for cryptocurrencies, regulatory approval continues to be one of the largest obstacles. Because distributed ledger technology is still in its infancy, it poses a number of questions for policymakers and regulators at both the national and international levels.

Cryptocurrencies have the potential to transform peer-to-peer and remittance transactions that carry no compliance requirements; however, end users must overcome obstacles relating to security, privacy, and control in order to make use of bitcoin. Because cryptocurrency transactions are stored in the blockchain, a distributed public database, hackers have a large attack surface from which to obtain sensitive data. If the public ledger is used to store private information about contracts or payment details, the fact that the file is replicated may make it simpler for hackers to reach that information. In both a hub-and-spoke arrangement and a distributed database, a compromised key can be exploited to gain access to the database.

Cryptocurrency is an area of finance and economics that has piqued the curiosity of several academic fields. It is being studied by a range of financial and technological analysts, which underscores its complexity and the lack of consensus on many issues. Numerous IT executives and technologists are interested in major cryptocurrencies like Bitcoin, Ethereum, and Litecoin. The process of producing a new coin is known as mining.
It is also a crucial part of the development and maintenance of the blockchain ledger because it is how the network validates the most recent transactions. In other words, mining is a processing-intensive way of maintaining records.

Cryptography is the process of encrypting data with a key and decoding it at the receiving end in order to protect it from attacks by cybercriminals. Quantum cryptography can be defined along the same lines: if the method used to encrypt the plaintext relies on the principles of quantum mechanics, it is considered quantum cryptography. It rests on the idea, often stated as the no-cloning theorem, that a quantum state cannot be copied or measured without being disturbed, so an interception cannot happen unnoticed, whether deliberate or accidental. In physics, a quantum is a discrete packet of energy. It is also important to keep in mind that quantum cryptography differs from post-quantum cryptography. Although it seems straightforward, the intricacy lies in comprehending the fundamentals of quantum mechanics that underlie this method:

Quantum cryptography, as defined above, uses quantum mechanics and quantum algorithms to make itself, in theory, unhackable. Consider a data transfer between a sender and a recipient. In quantum key distribution (QKD), the key used to encipher the plaintext is carried as a series of photons sent across an optical fibre line. By comparing measurements of these photons' properties, the parties involved can determine what the key is and whether it has been compromised by an outside party.

Step-by-Step Process of Quantum Cryptography-
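The text does not name a specific QKD protocol, so the following sketch assumes the widely described BB84 scheme purely for illustration. It is a classical toy simulation, not real quantum hardware or a library API: the function name `bb84`, the basis labels `+` and `x`, and the intercept-and-resend eavesdropper are all assumptions made for the example. It shows how comparing measurement choices yields a shared key and how an eavesdropper reveals itself as errors in that key.

```python
import random


def bb84(n_bits, eavesdrop=False, seed=0):
    """Toy BB84 run; returns (sender_key, recipient_key, error_rate)."""
    rng = random.Random(seed)
    sender_bits = [rng.randint(0, 1) for _ in range(n_bits)]
    sender_bases = [rng.choice("+x") for _ in range(n_bits)]
    recipient_bases = [rng.choice("+x") for _ in range(n_bits)]

    def measure(bit, prepared_basis, measuring_basis):
        # Matching bases read the bit faithfully; mismatched bases give a
        # random outcome, which models the disturbance caused by measuring.
        return bit if prepared_basis == measuring_basis else rng.randint(0, 1)

    recipient_bits = []
    for bit, s_basis, r_basis in zip(sender_bits, sender_bases, recipient_bases):
        if eavesdrop:
            # Intercept-and-resend: the eavesdropper measures in a random
            # basis and forwards a photon prepared in that basis.
            e_basis = rng.choice("+x")
            bit, s_basis = measure(bit, s_basis, e_basis), e_basis
        recipient_bits.append(measure(bit, s_basis, r_basis))

    # Sifting: keep only positions where sender and recipient chose the same basis.
    kept = [i for i in range(n_bits) if sender_bases[i] == recipient_bases[i]]
    sender_key = [sender_bits[i] for i in kept]
    recipient_key = [recipient_bits[i] for i in kept]
    errors = sum(a != b for a, b in zip(sender_key, recipient_key))
    error_rate = errors / len(kept) if kept else 0.0
    return sender_key, recipient_key, error_rate


_, _, clean_rate = bb84(2000)
_, _, tapped_rate = bb84(2000, eavesdrop=True)
print(f"error rate without eavesdropper: {clean_rate:.2f}")
print(f"error rate with eavesdropper:    {tapped_rate:.2f}")
```

In this model an undisturbed channel gives identical keys, while an intercept-and-resend attack corrupts roughly a quarter of the sifted bits, which is what lets the two parties detect that the key has been compromised.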

First and foremost, the easiest way to defend against attacks is to encrypt with longer keys. Longer keys, however, make encryption slower and more expensive. Another option is to transmit messages using symmetric encryption and then encrypt the key using asymmetric encryption. Transport Layer Security (TLS), an internet standard, is based on the same principle. The ideal course of action would be to use post-quantum cryptography techniques such as lattice-based encryption for the initial communication in order to exchange keys securely. Symmetric encryption could then be used to encrypt the main message.

The Power Management System (PMS), which controls electrical generators, switchboards, and major consumers, is frequently included in a vessel's Integrated Automation System (IAS). The main responsibility of the power management system is to make sure that power capacity always matches the vessel's power demand. Even if one of the generators should fail unexpectedly, the PMS makes sure that the load from major consumers does not exceed the capacity of the power plant. When necessary, the PMS automatically starts and stops backup generators. It may also occasionally reduce load from heavy consumers to prevent overload.
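The PMS behaviour described above (keeping demand within capacity even if a generator fails, starting backup generators, and reducing load from heavy consumers) can be sketched as a simple planning function. This is a minimal illustration under stated assumptions, not how any real PMS is implemented: the function `plan_power`, its parameters, and the rule "survive the loss of the largest running generator" are hypothetical choices made for the example.

```python
def plan_power(online_kw, standby_kw, load_kw, sheddable_kw=0.0):
    """Decide which standby generators to start and how much load to shed.

    online_kw / standby_kw: rated capacities of running / backup generators.
    load_kw: current demand; sheddable_kw: load that heavy consumers can drop.
    """
    online = list(online_kw)
    standby = sorted(standby_kw, reverse=True)  # try the largest backup first
    started = []

    def secure_capacity(generators):
        # Capacity that remains if the largest running generator trips.
        return sum(generators) - max(generators) if generators else 0.0

    # Start backup generators until the load is covered after a single failure.
    while secure_capacity(online) < load_kw and standby:
        unit = standby.pop(0)
        online.append(unit)
        started.append(unit)

    # If backups are not enough, reduce load from heavy consumers.
    shortfall = max(0.0, load_kw - secure_capacity(online))
    shed = min(shortfall, sheddable_kw)
    return {"started_kw": started, "shed_kw": shed, "secure": shortfall <= shed}


plan = plan_power(online_kw=[1000, 1000], standby_kw=[800], load_kw=1500)
print(plan)
```

With two 1000 kW generators online and a 1500 kW load, the loss of one unit would leave only 1000 kW, so the sketch starts the 800 kW backup; load is shed only when no backup remains.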

Finding a balance between generation and consumption is the primary goal of the AC MG Power Management System. Controlling the AC bus voltage and frequency is also necessary, especially when operating in the islanded mode. The DGs and ESSs can be modelled as voltage or current sources connected to the AC bus to examine these MGs. The two primary categories of power management strategies are those for islanded mode and those for grid-connected mode. In grid-connected mode, there are two categories of power management strategies: those for dispatched output power and those for undispatched/nondispatched power. The frequency of the system and the voltage of the AC bus are significant system factors that need to be managed. Additionally, the system needs to have an acceptable power sharing arrangement among DERs and take into account the ACMG's generation and consumption balance. The literature has offered a variety of strategies to handle these situations. This group includes the well-known droop-based control approach. In order for the voltage and frequency to remain within a reasonable range, the output active and reactive power of each DER must be calculated. RERs at their MPP are an additional option, but ESSs must manage AC bus voltage and frequency. They can help in-

A Power Management System is built on a network of connected devices and sensors that collect data from critical locations across your electrical infrastructure, from the service entry of your facility to all feeders, all the way down to final distribution and loads. A stand-alone power metering device or a device with incorporated metering functionality, such as a protection relay, breaker trip unit, motor control unit, or variable speed drive, can collect real-time power data. Many of these smart gadgets might already be present in your environment, waiting to be connected and used as a component of a more comprehensive, completely digital solution. Transformers, MV and LV switchgear, generators, transfer switches, power control panels, distribution panels, motor control centers, uninterruptible power supplies, and harmonic filters are just a few of the important electrical assets that can be monitored. A wide variety of data can be continuously gathered around-the-clock to support monitoring and analysis of the health of the equipment as well as real-time power conditions, power quality, and energy efficiency. Through a variety of simple-to-use web applications, such as electrical mimic diagrams, power events analysis, power quality and electrical equipment trends, reports, and dashboards, operational information about the power system is provided with situational awareness in mind. Technology that uses artificial intelligence to comprehend, interpret, and analyse human speech is known as speech analytics, sometimes known as interaction analytics. To evaluate call recordings and transcripts from digital channels like chat and text messaging, contact centres utilise speech analytics. Speech Analytics software's ability to analyse 100% of contacts around-the-clock allows contact centres to be more proactive and have a more precise understanding of what actually occurs during customer interactions. 
Thanks to their distinctive features, speech analytics applications are well suited to numerous crucial tasks. Here are a few typical use cases for contact centres.

The analysis of audio data and the gathering of client information can both be done using speech analytics technologies. This enhances current and future interactions, as well as the general customer experience. Due to the need to improve customer happiness, the contact centre industry is the primary application segment for speech analytics technology. With the use of the customer's speech, this technology may determine emotions, stress, the purpose for the call, and their degree of satisfaction. It can also be used to figure out whether a customer is unhappy or dissatisfied with the service they received. How Does Speech Analytics Work?

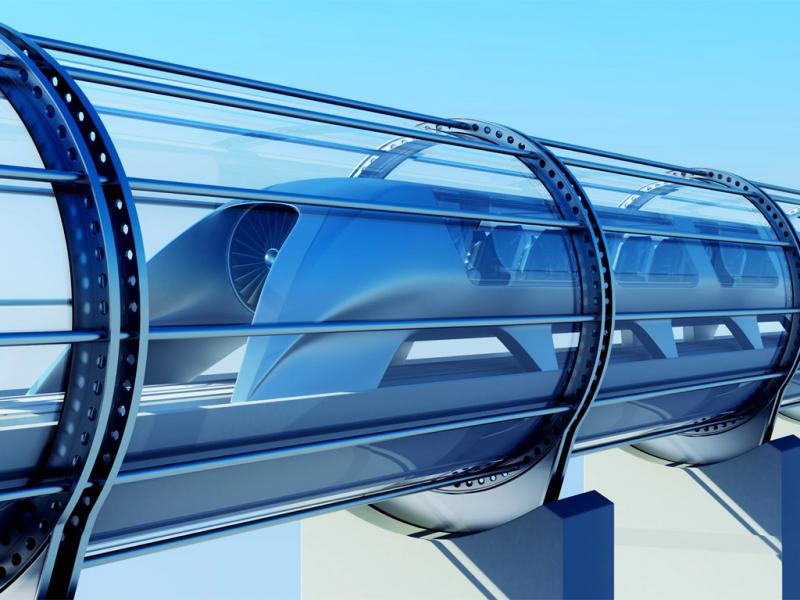

The Hyperloop is a futuristic mode of transport for people or goods. The technology was proposed by a joint team from SpaceX and Tesla. It consists of a network of tubes through which pods move people and goods with very little friction or air resistance, minimising overall travel time. The pods float within the tubes above the track and are propelled by electromagnets. The design builds on the concept of a vacuum train: because the tubes are evacuated or partially evacuated, most outside resistance is removed, allowing the pods to travel at speeds of several hundred miles per hour. Once it is up to speed, a pod is expected to glide on its own for about 100 miles.

The benefits of Hyperloop technology include quick transportation, increased security and safety, and more effective infrastructure. According to the Hyperloop One prototype, onboard environmental controls and localization capabilities will let pods communicate with the system controller more effectively. Through a network of sensors, the pods will be able to identify obstacles, improving passenger safety. Real-time data and an automatic braking sensor will also aid communication between the motor and the tubes, ensuring greater system uptime for operations and maintenance. For a completely new type of transportation that is faster and has a more robust network topology, systems and controls will be just as crucial as the physical advances in tubes and pods.

As a cutting-edge technology, Hyperloop faces many obstacles on the road to global adoption and commercialization. According to two significant companies working on the Hyperloop, SpaceX and Hyperloop One, terrain and natural disasters are expected to be a significant barrier. Design prototypes are being built to study air friction and the electromagnets, which is very expensive.
Additionally, it is evident that connecting to the pods for the online services related to the Hyperloop will be necessary, which could affect the magnetic field inside the tube and pose another significant challenge for the implementation process. Components of Hyperloop-

In 2012, Elon Musk made his first mention of the Hyperloop. The idea uses reduced-pressure tubes through which capsule-like pods are driven. The Hyperloop concept was first published in August 2013, with a proposed Los Angeles to San Francisco route that roughly followed the Interstate 5 corridor. Many experts have predicted that the project will run over budget; it will be very expensive once construction, development, and other costs are taken into account. Hyperloop is a cutting-edge technology designed to transport passengers and cargo between locations. A Hyperloop structure is essentially a sealed tube, like a tunnel, kept at very low air pressure so that pods can travel through it easily and with almost no friction. People and goods can be transported with ease, and the method uses little energy. Compared with trains and aeroplanes, it would significantly reduce travel times for distances under 1,500 kilometres (about 930 miles).

The Agricultural Robot Is A Specialized Technology That Can Assist Farmers With A Variety Of Tasks

6/10/2022

Agriculture robots are used in agricultural settings to assist with harvesting. Robots can sense, compute, and act upon the needs of plants, tasks that are challenging for humans. There are many ways that robots can be used in agriculture, including soil analysis, cloud seeding, weed control, sowing seeds, monitoring the environment, and harvesting. The orange harvester, Rosphere, and the Merlin Robot Milker are a few of the robot prototypes in this field. Agriculture robots are involved in crop planting, fertilising, and harvesting, all of which are important processes. Robotics facilitates better decision-making by offering thorough data at every stage of agricultural production. The technology is used to monitor crop quality, which also helps to increase production.
Horticulture is additionally another significant use of robots in agriculture. Manufacturers are concentrating on releasing goods for use in horticulture. The RV100, for instance, is made by Harvest Automation Inc. and is intended to manage and organise plants, including plant collection, spraying, and monitoring. Robotic fruit pickers, driverless tractors, and driverless sprayers can all be used to automate manual tasks like washing. Agriculture Robots are specialised technological devices that can help farmers with a variety of tasks. They can be programmed to grow and evolve to meet the requirements of different tasks, and they have the ability to analyse, consider, and perform a variety of tasks. There are a huge variety of tasks that agricultural robots can perform for farmers to make their lives easier. Their main responsibility is to complete laborious, repetitive, and physically taxing tasks. Robots are now, however, being used for a variety of specialised tasks that were previously only performed by skilled farmers. Picking out delicate produce, like lettuce and strawberries, is one example of this. Robotics is being used to lessen the physical demands of this task because crop harvesting has historically been a difficult task for farmers all over the world. Although farming is a laborious and repetitive activity that must be done, due to the nature of the work, robots are well suited to step in and take over. The manual dexterity needed to pick different fruits and vegetables has been the only real concern in this situation. Every type of produce has specific needs, which necessitates extensive research and mechanical know-how. Leafy vegetables are prone to tears, whereas fruits are known to bruise extremely easily. The robots that will be assigned to this task need to be programmed with a great deal of precision in order to tackle this problem. Fortunately, a few well-known companies are already filling this huge gap in agri-tech. 
An agricultural robot is a robot used for agriculture. Today, robotics is primarily used in agriculture during the harvesting process. Weed control, cloud seeding, seed planting, harvesting, environmental monitoring, and soil analysis are examples of new robotic or drone applications in agriculture. Fruit-picking robots, driverless tractors and sprayers, and sheep-shearing robots are all intended to take the place of human labour. Before a task begins, many factors must typically be taken into account, such as the size and colour of the fruit to be picked. Robots can also perform other horticultural tasks like pruning, weeding, spraying, and monitoring. In livestock farming, robots are used for castrating, washing, and automatic milking. These kinds of robots have many advantages for the agricultural sector, including improved fresh produce quality, reduced production costs, and less need for manual labour. They can also automate manual tasks like spraying bracken or weeds where using tractors and other human-operated vehicles would be too risky for the operators.

In the Retail and Manufacturing Industries, Barcode Scanners are Quickly Gaining Popularity.

2/8/2022

A barcode scanner is an electronic device that reads information encoded as a pattern of bars and passes it to a computer. It is generally used to capture the information marked on a product. Barcode scanners are made up of a light source and sensors that capture the encoded data, along with circuitry that analyses the data provided by the sensors. 2D barcode scanners are the most popular and fastest-moving barcode scanners on the market. The technologies used in barcode scanners include laser scanners, pen-type scanners, camera-based readers, and charge-coupled devices (CCD). Barcode scanners are increasingly being used in various aspects of the healthcare industry.
Tracking patient records precisely with barcode scanners has become sophisticated, helping to catch errors and reduce drug-related mistakes during a patient's hospital stay. Furthermore, according to IMNA, barcode scanner technology can correct approximately 51% of medication errors. According to the United States Food and Drug Administration (FDA), each drug is assigned a National Drug Code, a 10-digit identification number that allows drug information to be easily captured and stored.

Barcode scanners are rapidly becoming popular in the retail and manufacturing industries. They have evolved into one of the most effective solutions for gathering product information. Labels with barcodes help to record information such as product count, date of manufacture, expiry date for perishable products, selling price, date of supply to the retailer, and so on. Barcodes are printed on all products sold by major retailers such as Walmart, Costco, Carrefour, and IKEA.

Types of Barcode Scanners-

|


|